What's New in Jumper — March 2026

We’ve shipped a substantial update with new features across AI integration, search, export, and workflow improvements. Here’s what’s new.

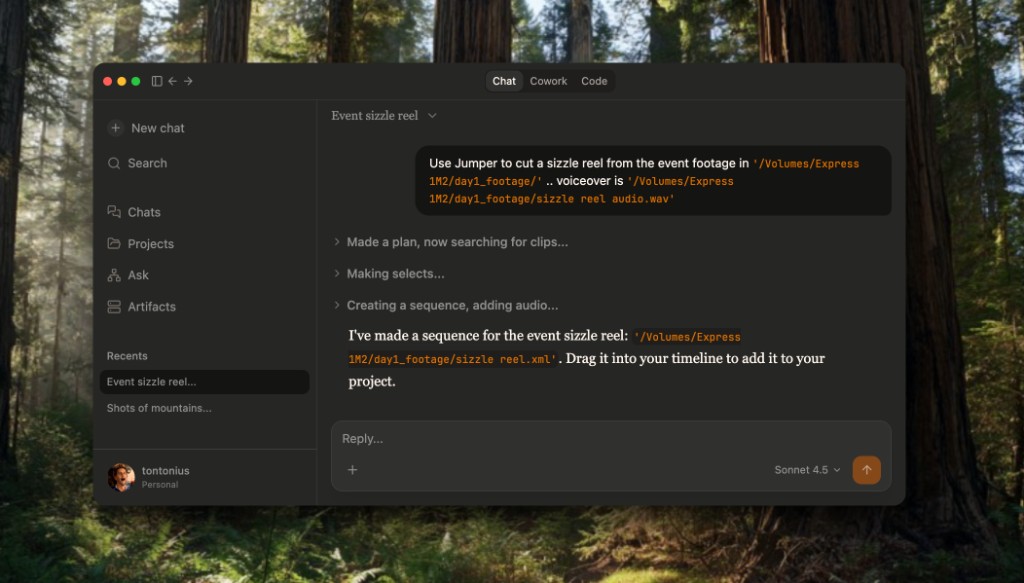

AI Agent support

Jumper can now be controlled by AI assistants like Claude or Codex. Agents can search your footage, find clips, export sequences, and orchestrate multi-step workflows, all through natural language. If you haven’t tried it yet, see our agentic editing guide and the blog post on agentic editing.

Faster, more accurate speech model for English

We’ve added a new speech model for English that is significantly more accurate, with a lower Word Error Rate (WER) and better timestamping. It’s also significantly faster at transcribing audio: as an example, it delivers frame-accurate transcription of a 2+ hour podcast in about 1.5 minutes.

As a result of this change, Jumper now ships with only this new English model. If you work in a different language, you can still download the old model.
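
For readers unfamiliar with the metric, Word Error Rate is conventionally defined as the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference text, divided by the number of words in the reference. The Python sketch below illustrates that standard definition only. It is not Jumper's evaluation code, and the function name and the simple lowercase, whitespace-based tokenization are assumptions made for the example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = (substitutions + deletions + insertions) / reference length.

    Illustrative only: tokenization here is a plain lowercase whitespace split,
    which is an assumption and not how any particular product measures WER.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # i deletions turn i reference words into an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(
                min(
                    prev[j] + 1,                      # deletion
                    curr[j - 1] + 1,                  # insertion
                    prev[j - 1] + substitution_cost,  # match or substitution
                )
            )
        prev = curr
    return prev[-1] / len(ref)


# One wrong word out of four reference words gives a WER of 0.25.
print(word_error_rate("the quick brown fox", "the quick brown dog"))
```

A lower value means fewer word-level mistakes. Because insertions count as errors, WER can exceed 1.0 when the transcript is much longer than the reference.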

Public API

We’ve added a local Public API for third-party integrations. Build custom tools, automate workflows, or connect Jumper to your existing pipeline. Full details are in the API reference.

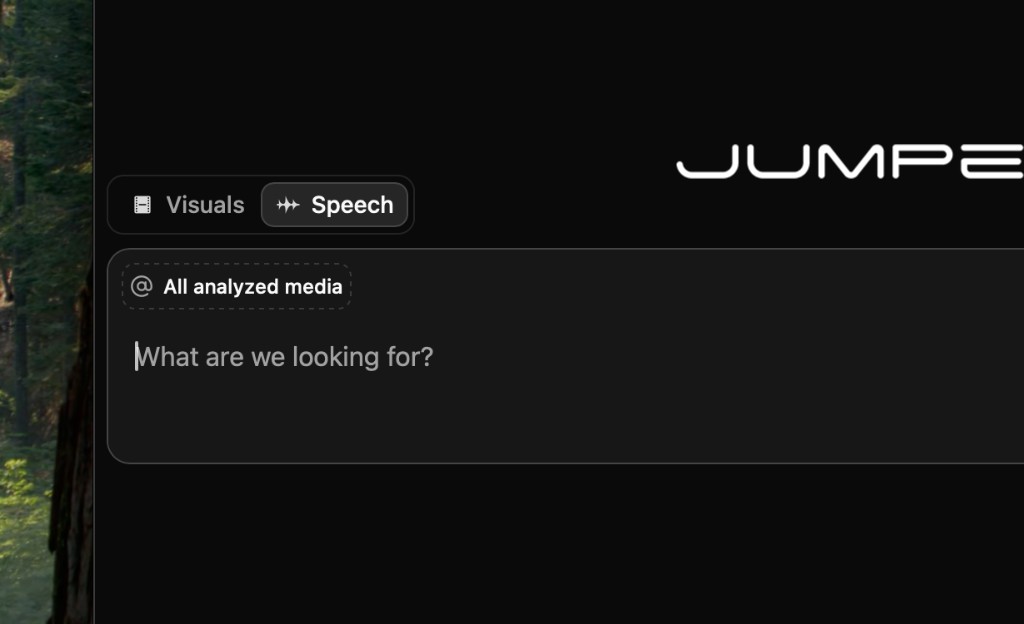

Global speech searches

As with visual searches, you can now run speech searches across all analyzed media, not just the media in your current project. Toggle the setting to search your entire library at once.

CSV export for search results

In Standalone mode, you can now copy the search results to your clipboard. The clipboard format is CSV with one row per search result. Useful for logging, reporting, or feeding results into other tools.
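
As a rough sketch of feeding the copied results into another tool, the Python snippet below parses pasted CSV text with the standard library's `csv` module. The sample rows and their column layout (file name, timecode, matched text) are invented for illustration. The actual columns Jumper writes, and whether a header row is included, are not described here, so check a real paste before relying on column positions.

```python
import csv
import io


def parse_clipboard_csv(text: str) -> list[list[str]]:
    """Parse CSV text into a list of rows, skipping completely empty lines."""
    # newline="" leaves line endings untouched so the csv module can handle
    # quoted fields that contain line breaks.
    reader = csv.reader(io.StringIO(text, newline=""))
    return [row for row in reader if row]


# Hypothetical sample data: these columns are assumptions, not Jumper's format.
SAMPLE = (
    'interview_01.mov,00:01:12,"Hello, and welcome"\n'
    'interview_02.mov,00:14:03,"Thanks for joining"\n'
)

for row in parse_clipboard_csv(SAMPLE):
    print(row)
```

Using `csv.reader` rather than splitting on commas matters here, since transcribed speech is likely to contain commas inside quoted fields.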

Drag-and-drop for adding media

On macOS and Windows, you can now add media to the standalone app by dragging and dropping files directly into the window.

As always, we’d love to hear your feedback. Join us on Discord to share ideas and get the latest updates.