Agentic Editing Guide

AI Agent Video Editing with MCP for Claude and Codex

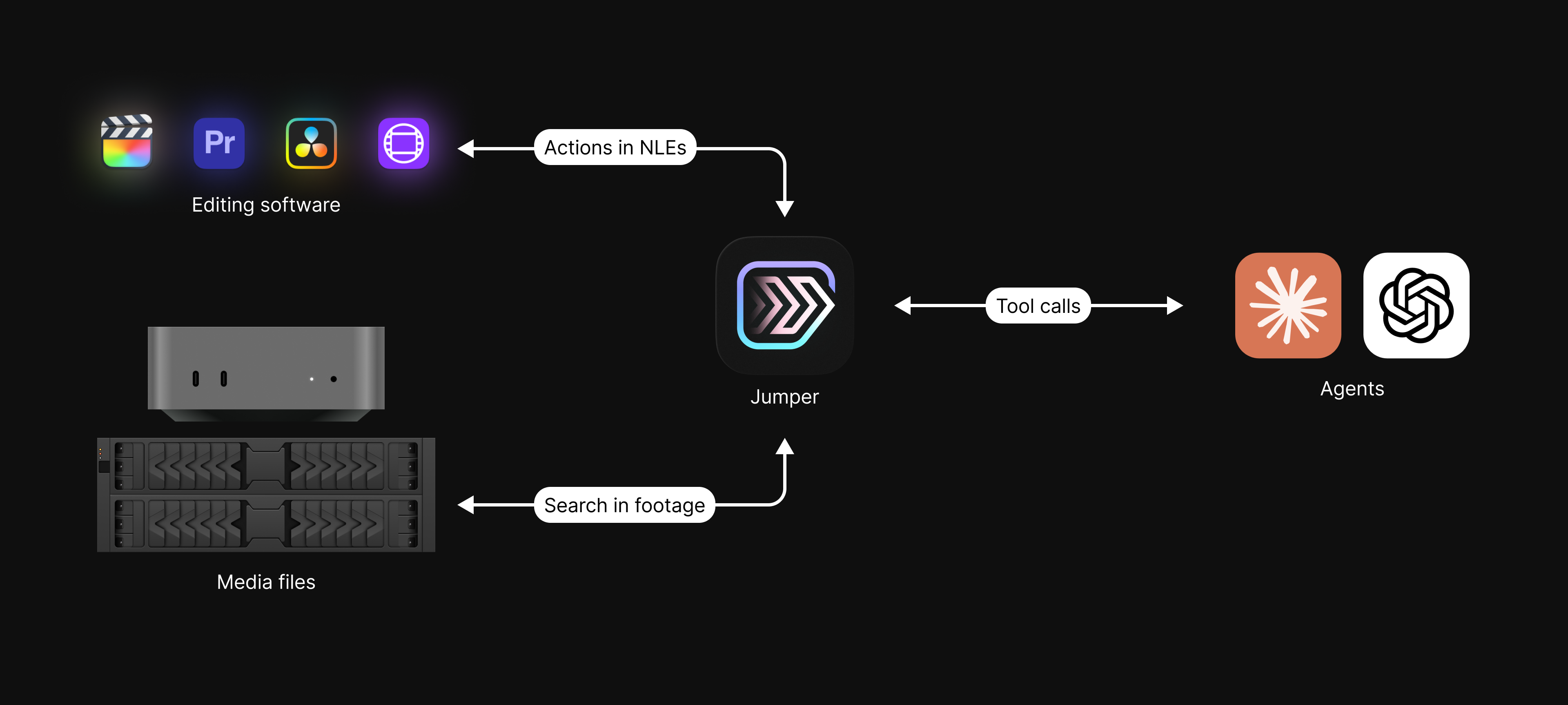

Jumper brings AI agents into professional video editing through a built-in MCP server. It uses the Model Context Protocol (MCP) so agents can help with repeatable tasks across your NLE workflow in Adobe Premiere Pro, DaVinci Resolve, Final Cut Pro, and Avid Media Composer.

At a glance

- Claude and OpenAI Codex (or any other MCP-compatible agent) can connect to Jumper

- Agents can perform visual search, transcript search, face-based search, and exports

- Through Jumper, agents can interact with Premiere, Resolve, Final Cut Pro, and Avid

- Media stays local on your machine and is not uploaded to the cloud

What is agentic editing?

Agentic editing means assigning structured editing tasks to an AI agent, then letting it execute multi-step workflows. Instead of manually repeating the same searches and clip pulls, you can ask an agent to find selects, group moments, and prepare timeline-ready outputs.

Using AI agents for video editing can speed up your workflow by automating time-consuming, repetitive tasks. Because agents can work asynchronously, you can let an agent handle a task in the background while you focus on more creative work.

MCP server for video editing

MCP (Model Context Protocol) is the interface pattern that lets tools expose capabilities to AI agents. Jumper comes with an MCP server, which means AI agents can call Jumper and leverage its search capabilities and integrations with NLEs.

Agents interact with the Jumper MCP server, and Jumper executes the requested actions, such as a visual search for "man in a red shirt" or "@Hanna smiling", or a transcript search for words or phrases.
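To make the exchange concrete, here is a minimal sketch of the message an agent sends when it calls a tool on an MCP server. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The tool name `visual_search` and its `query` argument are hypothetical placeholders for illustration, not Jumper's documented tool names.

```python
import json
from itertools import count

# Monotonically increasing JSON-RPC request ids.
_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# ASSUMPTION: "visual_search" and "query" are made-up names for illustration.
request = build_tool_call("visual_search", {"query": "man in a red shirt"})
print(json.dumps(request, indent=2))
```

In practice the agent builds and sends these messages for you. The sketch only shows what travels over the connection: a tool name and its arguments.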

Terminology

- AI agent: Claude/Codex or any other MCP-compatible agent

- Agentic editing: prompt-driven execution of editing workflows

- MCP server: a local server that exposes a set of actions through Model Context Protocol

Compatibility

We've included one-click connect for Claude Desktop, Claude Code, and OpenAI Codex Desktop. Because Jumper ships with an MCP server for video editing, it can be used with any MCP-compatible agent, although we have not tested this extensively.

| Category | Supported |
|---|---|
| AI Agents | Claude Desktop, Claude Code, OpenAI Codex Desktop |
| NLE Integrations | Premiere, Resolve, FCP, Avid |
| Operating Systems | macOS, Windows |

Connecting your AI agent with Jumper

Connecting your AI agent with Jumper is as simple as a single click.

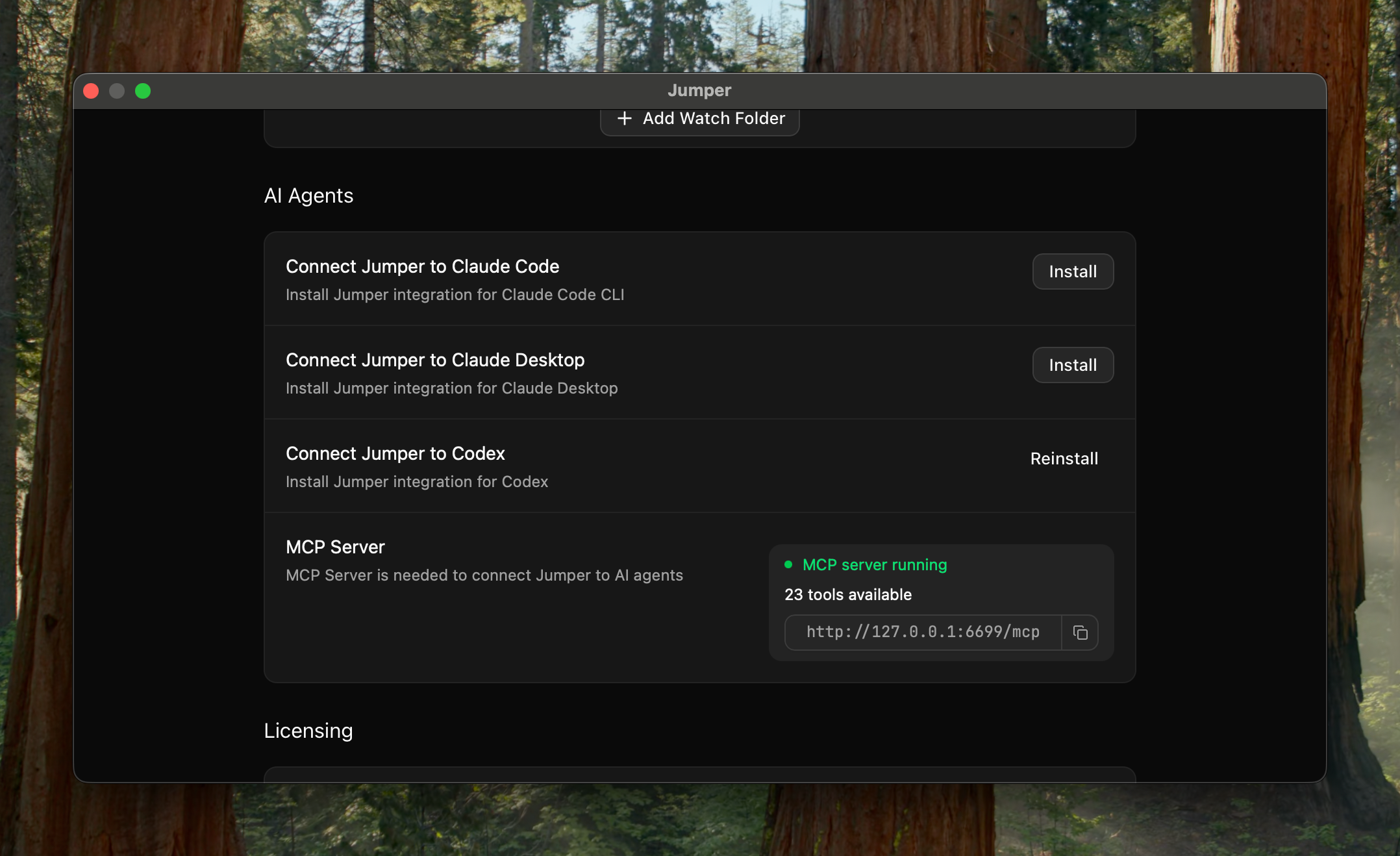

Open the Jumper Settings tab, scroll down to the "AI Agents" section, and click the "Install" button for your preferred agent.

If you want to configure the MCP connection manually (e.g., to use another agent harness), copy the MCP URL from the Settings tab and paste it into your agent's configuration.
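For manual setup, the sketch below generates the kind of configuration fragment that some agents read to register a URL-based MCP server. The `mcpServers`, `type`, and `url` keys follow the layout of project-level MCP config files such as Claude Code's `.mcp.json`. That layout is an assumption about your agent, so check its documentation for the exact schema. The server name `jumper` and the URL value are placeholders; use the MCP URL copied from Jumper's Settings tab.

```python
import json


def mcp_server_entry(name: str, url: str) -> dict:
    """Return a config fragment that registers a URL-based MCP server."""
    if not url:
        raise ValueError("MCP URL must not be empty")
    # ASSUMPTION: key names follow the `.mcp.json` style used by some agents.
    return {"mcpServers": {name: {"type": "http", "url": url}}}


# Placeholder only: replace with the URL shown in Jumper's Settings tab.
JUMPER_MCP_URL = "PASTE_MCP_URL_FROM_JUMPER_SETTINGS"

entry = mcp_server_entry("jumper", JUMPER_MCP_URL)
print(json.dumps(entry, indent=2))
```

If your agent already has an MCP config file, merge the new server entry into its existing server list instead of overwriting the file.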

Once installed, you can start using your agent with Jumper. To test the connection, ask your agent "can you use Jumper?" and it will tell you whether it's connected.

Real workflows

Here are some real-world editing workflows that can be sped up with Jumper and AI agents.

Documentary Filmmaking

- Pull B-roll matching a script or narrative theme

- Find and organize all moments featuring a specific character or interviewee

- Generate selects reels from transcript search (emotion, keywords, topics)

- Export rough-cut sequences for review based on agentic prompts

- Speed up stringouts and assembly edits using AI-guided clip selection

Reality TV & Unscripted

- Identify and extract all confessionals, sound-ups, and reaction moments for easy review

- Compile confrontation scenes or dramatic interactions between cast members

- Organize clips by cast member or storyline to support character-driven story arcs

- Pull B-roll of locations, entrances, and group shots

- Generate selects based on commonly needed moments: eliminations, reveals, group challenges, or emotional highs/lows

Corporate & Commercial

- Assemble highlight reels from customer testimonials or product shots for social campaigns

- Search for and organize footage of specific executives, teams, or branded locations

- Generate selects of on-message soundbites or presentation moments using transcript and brand keyword search

- Pull B-roll that matches campaign storylines, product launches, or event coverage requirements

Customize with skills

Skills are how you encode repeatable editing workflows for the agent. Each skill is a SKILL.md file that explains in plain text how the workflow should be used, what steps it runs, and how results should be returned.

Skills let you tweak and shape agent workflows to match your preferences. You can also add new skills for jobs your team repeats often, such as social cutdowns, B-roll sequences, and interview selects, which keeps that logic out of the general conversation.
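As an illustration of what a skill might contain, this sketch renders the text of a SKILL.md file covering the three things described above: when to use the workflow, what steps it runs, and how results are returned. The front matter block with `name` and `description` follows a common agent-skills convention and is an assumption here, as are the skill name, steps, and output wording. Jumper's bundled skills may be structured differently.

```python
def render_skill(name: str, description: str, steps: list[str], output: str) -> str:
    """Render SKILL.md text: front matter, numbered steps, and output format."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"---\nname: {name}\ndescription: {description}\n---\n\n"
        f"# {name}\n\n"
        f"## Steps\n\n{numbered}\n\n"
        f"## Output\n\n{output}\n"
    )


# ASSUMPTION: every value below is a made-up example, not a shipped skill.
skill_text = render_skill(
    name="interview-selects",
    description="Use when asked to pull interview selects on a given topic.",
    steps=[
        "Search transcripts for the topic keywords.",
        "Keep only moments where the interviewee is on camera.",
        "Group the results by interviewee.",
    ],
    output="Return a list of clips with file path, start and end timecode, and the matching quote.",
)
print(skill_text)
```

Because a skill is plain text, you can also write and edit the file by hand. Generating it is only useful when you maintain many similar skills.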

Privacy

Will my footage be uploaded to AI companies?

No. The agent talks to Jumper via MCP, sending commands like "search for shots of Anna smiling" or "export clips to this folder." Jumper runs a local server on your machine. All the heavy work (visual search, transcription, face recognition) happens on your computer. Your footage never leaves it.

What the agent receives back is metadata only: file paths, timecodes, transcript excerpts, search result lists. The agent orchestrates the workflow; Jumper does the actual analysis locally and returns pointers to where things are, not the footage itself.
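The sketch below models the kind of metadata described above and shows how an agent-side script might organize it without touching any footage. The `SearchHit` fields and the sample values are hypothetical. The actual shape of Jumper's responses is not specified here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchHit:
    """One search result: a pointer into a local file, not the media itself."""
    file_path: str
    start_tc: str  # timecode as HH:MM:SS:FF
    end_tc: str
    transcript_excerpt: str = ""


def group_by_file(hits: list[SearchHit]) -> dict[str, list[SearchHit]]:
    """Group hits by source file, preserving first-seen file order."""
    grouped: dict[str, list[SearchHit]] = defaultdict(list)
    for hit in hits:
        grouped[hit.file_path].append(hit)
    return dict(grouped)


# ASSUMPTION: made-up sample data for illustration only.
hits = [
    SearchHit("/Volumes/Media/interview_01.mov", "00:01:10:00", "00:01:18:12", "we started in a garage"),
    SearchHit("/Volumes/Media/broll_04.mov", "00:00:05:00", "00:00:09:00"),
    SearchHit("/Volumes/Media/interview_01.mov", "00:07:42:05", "00:07:50:00", "that changed everything"),
]

grouped = group_by_file(hits)
for path, file_hits in grouped.items():
    print(path, [(h.start_tc, h.end_tc) for h in file_hits])
```

Everything the script handles is text: paths, timecodes, and short excerpts. The media files themselves are only ever read by Jumper on your machine.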